The End of the World at the DoubleTree

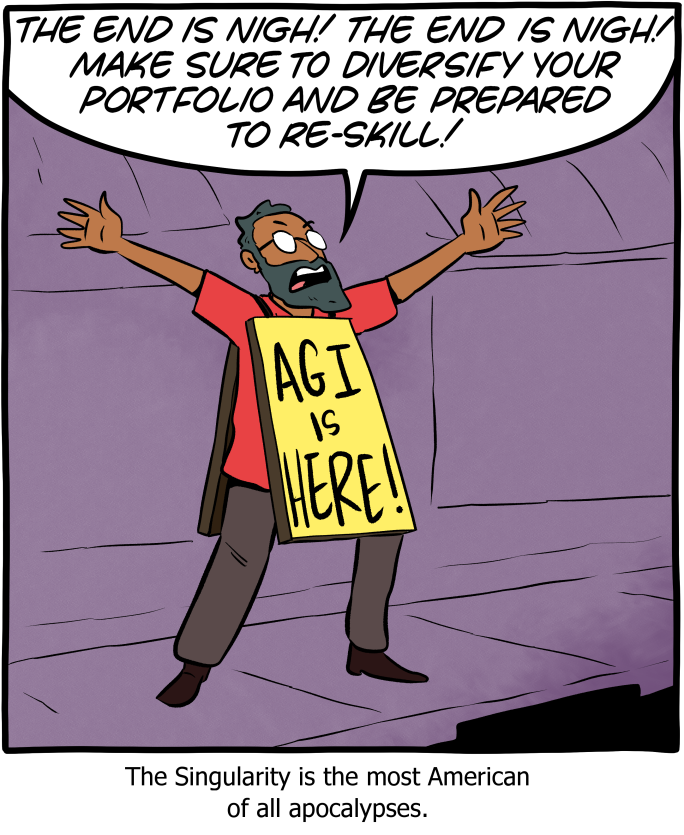

The end of the world used to be an event. Something to fear, to prevent, and if all else fails, to witness. Today, it’s a statistic. It’s a footnote. A rhetorical device used during a talk at a mid-tier conference at an airport hotel.

If the end of the world had to happen, it might as well be at the DoubleTree by Hilton, near Seattle-Tacoma Airport. A ballroom, with a patterned carpet. Unflattering conference lighting. Standing at the front of the room, the keynote speaker: Dr. Craig A. Kaplan of iQ Company, and he has a question:

“What do you think the probability is that AI causes human extinction? Raise your hand if you think it’s 10%. Raise your hand if you think it’s 50%.”

Almost everybody agrees it’s above 20%. About half think it’s 50% or higher. That seems to track with Kaplan’s data. It’s not the first time he’s asked this question. He notes that at a 1% chance of extinction, the expected loss is 82 million lives, and at 10% it’s 820 million, given a population of 8.2 billion. So, by the same math, if the researchers in the room could collectively reduce p(doom)¹ by even one-tenth of a percentage point, the expected value of that effort would be 8.2 million lives saved.
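For anyone who wants to check the slide, the arithmetic is simple enough to sketch in a few lines. This is my own illustration, not anything shown in the talk: the function name `expected_lives` is made up, and the only inputs are the 8.2 billion population figure and the percentages quoted above. Exact fractions are used so the results come out as whole numbers.

```python
from fractions import Fraction

# Population figure quoted in the keynote; everything else here is illustrative.
WORLD_POPULATION = 8_200_000_000


def expected_lives(probability: Fraction, population: int = WORLD_POPULATION) -> int:
    """Expected lives at stake: probability multiplied by population."""
    return int(probability * population)


scenarios = [
    ("1% chance of extinction", Fraction(1, 100)),
    ("10% chance of extinction", Fraction(10, 100)),
    ("0.1-point reduction in p(doom)", Fraction(1, 1000)),
]

for label, probability in scenarios:
    print(f"{label}: {expected_lives(probability):,} lives")
```

The numbers match the ones on the slide: 82 million, 820 million, and 8.2 million.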

There’s not much of a reaction. He advances to the next slide. The Six Components You Need For Safe Superintelligence.

This comes from “AIM 2025 Superintelligence Keynote: What’s Your p(doom)?” given at the DoubleTree in Seattle on June 9th, 2025. It was uploaded to YouTube a few days later. As of this writing, about 10 months later, it’s up to 208 views.

Just 208 people have watched the video where a room full of AI researchers raised their hands to indicate they believed there was a coin-flip chance that their field would produce human extinction.

I need to be careful here. I’m not saying Craig Kaplan is cynical, or emblematic of the malaise towards extinction. I have no reason to believe he’s anything but what he appears to be: an AI researcher who thinks seriously about AI risk, believes those risks to be genuinely civilizational, and has developed a product to address those stakes.

He’s not trying to manipulate the data. 8.2 million lives is an actual calculation, not a con. He means it.

The Commodification of Concern

The term p(doom) began as rationalist shorthand for the probability that AI leads to existential catastrophe, then spilled into mainstream coverage. In 2023, notable figures Geoffrey Hinton and Yoshua Bengio started giving explicit percentage‑style estimates of AI catastrophe risk. One ABC interview has Bengio put his own odds of a catastrophic outcome at “around 20 per cent.”

And in 2025, it became a conference format. Ask the room, count the hands. Advance to the next slide.

Somewhere between “technical shorthand that forces epistemic precision” and “an interactive opener for a product keynote”, something happened. I’m not sure what exactly. Call it a casualty of attention economics, or the commodification of concern. It’s not that the term became less serious, or the concern became less genuine. If anything, the concept has become increasingly needed and worthy of discussion. But it’s a hook. It’s content. It’s a way to get your audience’s attention before you tell them about the six design components.

This is what happens when professional incentives converge with genuine beliefs. They operate together, simultaneously, indistinguishably, and it doesn’t take long before those involved can’t tell them apart.

Adolescence in Davos

Several months later, at a slightly bigger and more well-known conference in Davos, Dario Amodei published a 20,000-word essay titled “The Adolescence of Technology.” Amodei is the CEO of Anthropic, the company that was founded on the idea of prioritizing AI safety over anything else. Its flagship product, Claude, has been called “the most powerful and popular AI system for global business” by Fortune Magazine.

It’s a well-written, passionate essay. But as Fortune noted, it’s also simultaneously “an impassioned prophecy and a novella-length marketing message.”

Both descriptions are accurate, and they aren’t mutually exclusive.

The essay focuses on five categories of existential risk from AI. These are:

Autonomy risks

Misuse for destruction

Misuse for seizing power

Economic disruption

Indirect effects

Each one of these presents an endless wormhole of philosophical debate and rationalizations. Each one on its own could make up an entire Ph.D. But for our purposes here, I’m going to focus on one: misuse for seizing power.

In this section, Amodei lays out the actors most likely to precipitate this risk. He posits that it can be achieved in a number of ways, and tells us, explicitly, who he is most worried about exercising this power and exacerbating the risk. Of the four he lists, three are state-level actors (the CCP, democracies with competitive AI tools, and non-democratic countries other than China with large data centres). But it’s the last category that is the most relevant to this essay.

He writes,

“It is somewhat awkward to say this as the CEO of an AI company, but I think the next tier of risk is actually AI companies themselves.”

Awkward, indeed.

Amodei is describing a situation where the company he runs, the company he founded, the one that’s grown its revenue run rate from about $4 billion to over $9 billion in roughly six months, is a civilizational risk.

He’s not talking about accidentally hitting reply-all on a sensitive email. He’s not talking about accidentally calling your teacher “Mom,” nor is he talking about telling your server, “you too,” after they tell you to enjoy your meal.

Dario Amodei is describing the possibility that his company is in the same risk category as authoritarian governments seeking to use superintelligence to seize permanent global control.

Awkward doesn’t begin to cover it.

Extinction as a Growth Strategy

Both Kaplan and Amodei are being earnest. They believe what they’re saying, and they’re building the product anyway. Craig Kaplan, at the podium of the ballroom at the DoubleTree by Hilton near Seattle-Tacoma Airport, genuinely believes that the probability of human extinction is somewhere in the range his audience indicated. Dario Amodei, sitting in his luxe Swiss accommodations at Davos, genuinely believes his company poses a civilizational risk.

They both believe it, and use it as an informal hand-raise poll to kick off a presentation. They believe it, and the word of choice is… awkward.

The language of existential risk has become institutional currency. It’s not propaganda. It’s not a lie. Genuine belief and professional self-interest operate in the same body, in the same sentence, and each becomes load-bearing for the other. The belief makes the product credible. The product funds the belief. The extinction risk, the p(doom), gets the room’s attention. That attention sells the six components. The essay acts as moral high ground, and, as Fortune puts it, brand positioning.

They believe it, and they’re building it anyway. That belief alone, in a different industry, would be grounds to hit the brakes. But it’s a growth strategy. What used to be a constraint on action is now a prerequisite. Existential risk unlocks funding, attracts safety-conscious talent, reassures regulators, and differentiates products in a crowded market. It stops being a warning and starts becoming the price of entry.

The end of existence. Existential risk. Civilization-ending doom is a possibility. It’s happening. And there aren’t any villains to point to.

They believe it, and they’re building it anyway. That rewires the whole conversation around risk. If extinction talk is an entry fee, table stakes, for being taken seriously, regulators begin to see it as a sign of sophistication instead of an alarm. It gets quoted as evidence of responsibility. It gets absorbed as background noise, and the language that was supposed to trigger the brakes morphs into a marketing asset. That’s what concern looks like when it’s been commodified.

The Primer in the Signal

Dario Amodei begins his essay by describing a scene from Contact, the film adaptation of Carl Sagan’s novel. He uses it as a signal for the hope he carries that humanity will be able to overcome this challenge.

In Contact, the key moment comes when the researchers realize that the message’s own structure contains the instructions for how to interpret it. They’re inseparable.

Jodie Foster in Contact (1997)

Here, we’re seeing that the warning and the business model are inseparable as well. The key that legitimizes the product (existential risk) is embedded in the same discourse that sells you the product.

Amodei is aware of this. That’s why his essay, at 20,000 words, offers a very thorough description of the problem, but only hints at solutions that are compatible with Anthropic’s need to continue to deliver frontier models. Anthropic does indeed take this seriously though. The recent non-release-cum-publicity stunt of Mythos shows this. But that’s for a different essay.

Back to Seattle for a moment. The video of Dr. Kaplan’s lecture has 208 views. At least two of those are mine. But those numbers prove something. Moments like that, a researcher casually using the probability of extinction as an icebreaker, they’re happening constantly. In rooms nobody is watching. In service of products that may or may not address the thing they claim to address. The DoubleTree and Davos aren’t different events, just different scales. It’s not a Davos problem. It’s not a Dario Amodei or Sam Altman or Elon Musk problem. And it’s not Dr. Kaplan’s problem. It’s the texture of the industry. It’s genuine belief and institutional interest being fused together in a way nobody involved can fully distinguish.

And there’s the more disturbing possibility. Not that these guys don’t mean it.

That they do.

1 That’s the real industry term for this. The probability of doom. You can’t make this stuff up.